Autonomous cars are becoming a common sight on the roads, and people are growing more comfortable and feeling safer while riding in them.

Safety systems in self-driving cars are improving every day to reduce the number of accidents and of pedestrians hit. New functions keep being introduced to help prevent harm to people on the streets.

But a newly released study shows some shocking results. AI systems used in autonomous cars are more likely to hit black people! The statement might seem racist, but the numbers speak for themselves.

Shocking Findings

The new study from Georgia Tech shows that the face and body recognition technology integrated into many pedestrian detection systems does not detect and react to darker-skinned persons as consistently as it does to lighter-skinned ones.

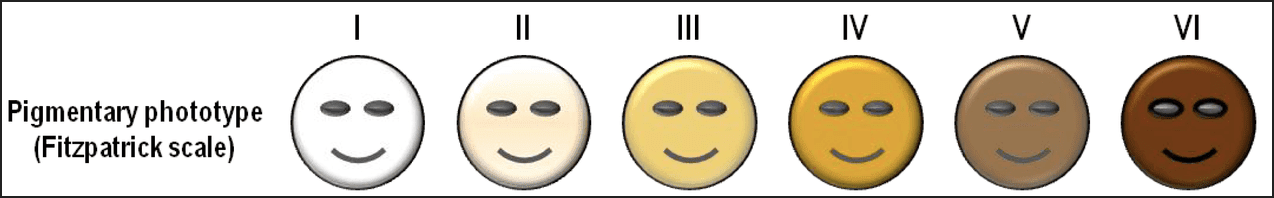

Using the Fitzpatrick scale shown below, when confronted with pictures of human beings with skin types four, five, and six, the algorithms were consistently between 4 and 10% less accurate. This suggests that, if the problem is not solved, dark-skinned pedestrians are more likely to go undetected by autonomous cars, and therefore more likely to be hit.
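To make that comparison concrete, here is a minimal sketch of how a detection-rate gap between the two skin-type groups could be computed. The function name, the group labels, and the sample records are all made up for illustration; they are not the study's code or data.

```python
from collections import defaultdict


def detection_rate_by_group(records):
    """Return the share of pedestrians detected in each skin-tone group.

    `records` is an iterable of (fitzpatrick_type, detected) pairs, where
    fitzpatrick_type is an integer from 1 to 6 and detected is a bool.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for skin_type, detected in records:
        # Types 1-3 are grouped as "lighter", types 4-6 as "darker".
        group = "lighter" if skin_type <= 3 else "darker"
        totals[group] += 1
        hits[group] += int(detected)
    return {group: hits[group] / totals[group] for group in totals}


# Hypothetical detector outputs, for illustration only.
records = [
    (1, True), (2, True), (3, True), (2, False),
    (4, True), (5, True), (6, False), (5, False),
]

rates = detection_rate_by_group(records)
gap = rates["lighter"] - rates["darker"]
print(rates)
print(f"gap: {gap:.2f}")
```

A positive gap means the detector misses darker-skinned pedestrians more often than lighter-skinned ones on this particular sample.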

Let’s think about it: systems and algorithms cannot be racist in the sense of choosing to hit more black people. AI systems “learn” and then perform, so when a person crosses the street, the system tries to determine whether this “object” resembles the pictures it has learned from before.

The Real Problem

So why do the results differ depending on people’s skin color?

Because algorithmic systems “learn” from the examples they are fed, if they don’t acquire enough samples of, say, black women during the learning stage, they will struggle to recognize them when deployed.

Similarly, the authors of the self-driving car research point out that a number of factors are likely contributing to the difference they observed. First, the object-detection models were primarily trained on light-skinned people. Second, the models did not place enough emphasis on learning from the few samples of persons with dark skin that they did have.

Suggested Solution

According to the Georgia Tech team’s findings, we may be on the verge of a future in which a world filled with self-driving cars isn’t as safe for individuals with darker skin tones as it is for lighter-skinned pedestrians.

Automated cars may be growing smarter by the day, but as other studies before this one have shown, they are still not smart enough to be on our roads, despite the fact that they are already there and have killed pedestrians.

Fortunately, based on their research, the Georgia Tech researchers were able to determine what we need to do to avoid a future of prejudiced self-driving cars: start putting more photos of dark-skinned people in the data sets that systems train on, and place greater emphasis on correctly identifying such images.
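One common way to "place greater emphasis" on an under-represented group is to weight each training sample by the inverse of its group's frequency. The sketch below assumes that approach; it is not necessarily what the researchers did, and the function names, group labels, and loss values are hypothetical.

```python
from collections import Counter


def inverse_frequency_weights(groups):
    """Give each group a weight of n / (k * count): rarer groups weigh more."""
    counts = Counter(groups)
    n = len(groups)
    k = len(counts)
    return {group: n / (k * count) for group, count in counts.items()}


def weighted_mean_loss(losses, groups, weights):
    """Average per-sample losses, scaling each one by its group's weight."""
    total_weight = sum(weights[g] for g in groups)
    weighted_sum = sum(weights[g] * loss for loss, g in zip(losses, groups))
    return weighted_sum / total_weight


# Hypothetical, imbalanced training batch: 8 lighter-skinned samples, 2 darker.
groups = ["lighter"] * 8 + ["darker"] * 2
# Made-up per-sample losses: the model does worse on the rare group.
losses = [0.1] * 8 + [0.9] * 2

weights = inverse_frequency_weights(groups)
plain = sum(losses) / len(losses)
weighted = weighted_mean_loss(losses, groups, weights)
print(weights)
print(f"plain mean loss: {plain:.2f}, weighted mean loss: {weighted:.2f}")
```

With the plain average, the poor performance on the two dark-skinned samples is diluted by the eight others. With the weights, both groups contribute equally, so training is pushed harder to fix those mistakes. Collecting more photos of dark-skinned people attacks the same imbalance directly at the data level.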

I believe that it is more important to address the underlying racial causes of traffic violence that negatively affect communities of color than to assume that a technology we created is the main problem that needs to be solved.